Two major trends

Trend 1:

Computing means Mobile.

53% of adults media multi-task while watching TV

Trend 2:

Video is HUGE.

Video is forecast to be 80-90% of internet traffic by 2017.

Ye olde Flashe vid

<object classid="clsid:d27cdb6e-ae6d-11cf-96b8-444553540000" width="425" height="344"
    codebase="http://download.macromedia.com/pub/shockwave/cabs/flash/swflash.cab#version=6,0,40,0">
  <param name="allowFullScreen" value="true" />
  <param name="allowscriptaccess" value="always" />
  <param name="src" value="http://www.eurgh.com/v/oHg5SJYRHA0&hl=en&fs=1&" />
  <param name="allowfullscreen" value="true" />
  <embed type="application/x-shockwave-flash" width="425" height="344"
      src="http://www.eurgh.com/v/oHg5SJYRHA0&hl=en&fs=1&"
      allowscriptaccess="always" allowfullscreen="true">
  </embed>
</object>

<video src="chrome.webm"></video>

Codecs for the modern Web

VP8 and VP9: Open codecs for the web

- VP8 built into devices - including camera chips

- Systems with dedicated VP8 support

- VP9 vs. H.264: comparison from Google I/O
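With both WebM (VP8/VP9) and MP4 (H.264) files available, the page has to pick one per browser. The `type` attributes on the `<source>` elements let the browser make that choice declaratively; as a sketch of the same decision in script, `HTMLMediaElement.canPlayType()` answers `"probably"`, `"maybe"` or `""` for a MIME type. The `pickSource` helper and the `codecs` strings below are illustrative assumptions, not taken from the talk.

```javascript
// Hypothetical helper: return the URL of the first candidate the element
// reports as "probably" playable, else the first "maybe", else null.
// `media` is any HTMLMediaElement, e.g. document.createElement("video").
function pickSource(media, sources) {
  let fallback = null;
  for (const { src, type } of sources) {
    const verdict = media.canPlayType(type); // "probably", "maybe" or ""
    if (verdict === "probably") return src;
    if (verdict === "maybe" && fallback === null) fallback = src;
  }
  return fallback;
}

// Candidates mirroring the talk's WebM/MP4 pair; the codecs parameters are
// assumptions about how the files were encoded.
const candidates = [
  { src: "chrome.webm", type: 'video/webm; codecs="vp9, vorbis"' },
  { src: "chrome.mp4", type: 'video/mp4; codecs="avc1.42E01E, mp4a.40.2"' },
];

// In a page:
// const video = document.createElement("video");
// video.src = pickSource(video, candidates);
```

Unlike the built-in `<source>` selection, which takes the first candidate the browser does not reject outright, this sketch prefers a later `"probably"` over an earlier `"maybe"`.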

<video>
  <source src="chrome.webm" />
  <source src="chrome.mp4" />
</video>

<video>
  <source src="chrome.webm" type="video/webm" />
  <source src="chrome.mp4" type="video/mp4" />
</video>

<video poster="images/poster.jpg">
  <source src="chrome.webm" type="video/webm" />
  <source src="chrome.mp4" type="video/mp4" />
</video>

<video poster="images/poster.jpg" autoplay preload="metadata">
  <source src="chrome.webm" type="video/webm" />
  <source src="chrome.mp4" type="video/mp4" />
</video>

Advanced video features

Alpha transparency

Captions and subtitles

<video poster="images/poster.jpg" autoplay preload="metadata">
  <source src="chrome.webm" type="video/webm" />
  <source src="chrome.mp4" type="video/mp4" />
  <track src="track.vtt" kind="captions" />
  <p>Video element not supported.</p>
</video>
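The `track.vtt` file is a WebVTT text file: a `WEBVTT` header line, then cues separated by blank lines, each with a `start --> end` timing line in `hh:mm:ss.ttt` form followed by the caption text. A minimal sketch of generating one from JavaScript; `toVttTimestamp`, `buildVtt` and the cue text are hypothetical.

```javascript
// Format a time in seconds as a WebVTT timestamp: hh:mm:ss.ttt
function toVttTimestamp(seconds) {
  const totalMs = Math.round(seconds * 1000);
  const ms = totalMs % 1000;
  const totalSec = Math.floor(totalMs / 1000);
  const s = totalSec % 60;
  const m = Math.floor(totalSec / 60) % 60;
  const h = Math.floor(totalSec / 3600);
  const pad = (n, width) => String(n).padStart(width, "0");
  return `${pad(h, 2)}:${pad(m, 2)}:${pad(s, 2)}.${pad(ms, 3)}`;
}

// Build a WebVTT document from { start, end, text } cues (times in seconds).
function buildVtt(cues) {
  const blocks = cues.map(
    (cue) => `${toVttTimestamp(cue.start)} --> ${toVttTimestamp(cue.end)}\n${cue.text}`
  );
  // Header first, then each cue, all separated by blank lines.
  return ["WEBVTT", ...blocks].join("\n\n") + "\n";
}

// Usage with hypothetical cue text:
const vtt = buildVtt([
  { start: 0, end: 2.5, text: "Hello, HTML5 video!" },
  { start: 65.25, end: 70, text: "Captions come from a track element." },
]);
```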

Media Fragments

<video src="chrome.webm#t=5,10"></video>

Deep linking, deep search

Synchronised metadata
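The `#t=5,10` suffix is a temporal Media Fragment: start and end times in seconds, so a supporting browser plays only the 5s to 10s range. Browsers apply this natively; reading the fragment yourself is only needed for custom players or deep-link UIs. A sketch, assuming plain-seconds values (optionally prefixed `npt:`); the `hh:mm:ss` forms are not handled, and `parseTimeFragment` is hypothetical.

```javascript
// Parse the temporal part of a media fragment URL such as "chrome.webm#t=5,10".
// Handles "#t=5,10", "#t=5" (open-ended), "#t=,10" (from 0) and an "npt:" prefix.
// Returns { start, end } in seconds (end is null if open-ended), or null.
function parseTimeFragment(url) {
  const hash = url.indexOf("#");
  if (hash === -1) return null;
  // A fragment may carry several name=value pairs separated by "&".
  for (const pair of url.slice(hash + 1).split("&")) {
    const [name, value] = pair.split("=");
    if (name !== "t" || value === undefined) continue;
    const [startText, endText] = value.replace(/^npt:/, "").split(",");
    const start = startText === "" ? 0 : Number(startText);
    const end = endText === undefined ? null : Number(endText);
    if (Number.isNaN(start) || (end !== null && Number.isNaN(end))) return null;
    return { start, end };
  }
  return null;
}

// A custom player could then seek with: video.currentTime = range.start;
```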

Media Source Extensions

(generating streams from JavaScript)

Adaptive Streaming

EME: Encrypted Media Extensions
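With Media Source Extensions the page fetches media segments itself and appends them to a `SourceBuffer` with `appendBuffer()`, so script decides which quality to fetch next; that is what makes adaptive streaming possible in the browser. The rule below is a hypothetical, deliberately simple heuristic (take the highest rendition that fits within a safety fraction of measured throughput), not the algorithm of any particular player; the rendition ladder is invented for illustration.

```javascript
// Hypothetical rendition ladder (bits per second), e.g. read from a manifest.
const renditions = [
  { id: "240p", bitrate: 400000 },
  { id: "480p", bitrate: 1000000 },
  { id: "720p", bitrate: 2500000 },
  { id: "1080p", bitrate: 5000000 },
];

// Estimate throughput from one segment download: bits per second.
function throughputBps(bytes, milliseconds) {
  return (bytes * 8 * 1000) / milliseconds;
}

// Pick the highest-bitrate rendition within `safety` x throughput;
// fall back to the lowest rendition if none fits.
function chooseRendition(ladder, measuredBps, safety = 0.8) {
  const budget = measuredBps * safety;
  const sorted = [...ladder].sort((a, b) => a.bitrate - b.bitrate);
  let choice = sorted[0];
  for (const r of sorted) {
    if (r.bitrate <= budget) choice = r;
  }
  return choice;
}
```

In a player, the next segment of the chosen rendition would be fetched and passed to `sourceBuffer.appendBuffer()`; the safety margin leaves headroom so a brief dip in bandwidth does not immediately stall playback.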

Local media input

getUserMedia

It's pretty simple.

var constraints = {video: true};

function successCallback(stream) {
  var video = document.querySelector("video");
  // Attach the live stream directly; URL.createObjectURL(stream) no longer works
  video.srcObject = stream;
}

function errorCallback(error) {
  console.log("getUserMedia error: ", error);
}

navigator.mediaDevices.getUserMedia(constraints)
  .then(successCallback)
  .catch(errorCallback);

gUM + Canvas
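A typical gUM + Canvas effect copies the current video frame into a canvas, edits the pixels, and writes them back. A sketch, assuming a playing `<video>` fed by getUserMedia and a `<canvas>` sized to match; the grayscale weights (BT.601 luma: 0.299, 0.587, 0.114) are one common choice, and `toGrayscale`/`drawGrayFrame` are hypothetical.

```javascript
// Convert RGBA pixel data to grayscale in place using BT.601 luma weights.
// `data` is an ImageData.data array: 4 bytes (R, G, B, A) per pixel.
function toGrayscale(data) {
  for (let i = 0; i < data.length; i += 4) {
    const luma = 0.299 * data[i] + 0.587 * data[i + 1] + 0.114 * data[i + 2];
    data[i] = data[i + 1] = data[i + 2] = luma; // alpha (i + 3) is untouched
  }
  return data;
}

// Browser-only: grab the current video frame, filter it, and draw it back.
function drawGrayFrame(video, canvas) {
  const ctx = canvas.getContext("2d");
  ctx.drawImage(video, 0, 0, canvas.width, canvas.height);
  const frame = ctx.getImageData(0, 0, canvas.width, canvas.height);
  toGrayscale(frame.data);
  ctx.putImageData(frame, 0, 0);
}

// In a page, redraw on every animation frame:
// function loop() { drawGrayFrame(video, canvas); requestAnimationFrame(loop); }
// requestAnimationFrame(loop);
```

Because `ImageData.data` is a `Uint8ClampedArray`, the fractional luma values are rounded and clamped to 0-255 on assignment.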

Select resolution

Select mic and camera
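Resolution and device choice are both expressed as getUserMedia constraints. A sketch using the promise-based `navigator.mediaDevices` API: `enumerateDevices()` lists devices with a `kind` such as `"audioinput"` or `"videoinput"`, and a chosen `deviceId` goes back into the constraints. `buildConstraints`, `openPreferredDevices` and the 1280x720 default are illustrative assumptions.

```javascript
// Build getUserMedia constraints for a camera, mic and preferred resolution.
// `ideal` asks the browser to get as close as it can instead of failing.
function buildConstraints(devices, width = 1280, height = 720) {
  // Device IDs can be empty strings until the user has granted permission.
  const camera = devices.find((d) => d.kind === "videoinput" && d.deviceId);
  const mic = devices.find((d) => d.kind === "audioinput" && d.deviceId);
  const video = { width: { ideal: width }, height: { ideal: height } };
  if (camera) video.deviceId = { exact: camera.deviceId };
  return {
    video,
    audio: mic ? { deviceId: { exact: mic.deviceId } } : true,
  };
}

// Browser-only: list devices, then request the chosen ones.
async function openPreferredDevices(video) {
  const devices = await navigator.mediaDevices.enumerateDevices();
  const stream = await navigator.mediaDevices.getUserMedia(buildConstraints(devices));
  video.srcObject = stream;
  return stream;
}
```

A real picker would show `device.label` in a drop-down and pass the selected `deviceId` instead of taking the first match.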

gUM screencapture!

Be sure to enable screen capture support in getUserMedia!

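// Legacy, Chrome-only constraint syntax from the time of this talk
var constraints = {
  video: {
    mandatory: {
      chromeMediaSource: 'screen'
    }
  }
};

// gotStream(stream): success callback that shows the stream in a <video>
navigator.getUserMedia(constraints, gotStream);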
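The `chromeMediaSource: 'screen'` constraint was a Chrome-specific, flag-gated mechanism. Current browsers expose screen capture through `navigator.mediaDevices.getDisplayMedia()`, which asks the user to choose a screen, window or tab. A minimal sketch; `startScreenCapture` is hypothetical, and `mediaDevices` is passed in (normally `navigator.mediaDevices`) so the function can be exercised outside a page.

```javascript
// Capture a screen, window or tab and show it in a <video> element.
function startScreenCapture(mediaDevices, video) {
  return mediaDevices.getDisplayMedia({ video: true }).then((stream) => {
    video.srcObject = stream;
    // "ended" fires when the user stops sharing from the browser's own UI.
    stream.getVideoTracks()[0].addEventListener("ended", () => {
      video.srcObject = null;
    });
    return stream;
  });
}

// In a page, call it from a click handler (some browsers require a user gesture):
// button.onclick = () => startScreenCapture(navigator.mediaDevices, video)
//   .catch((err) => console.log("Screen capture failed: ", err));
```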

WebRTC

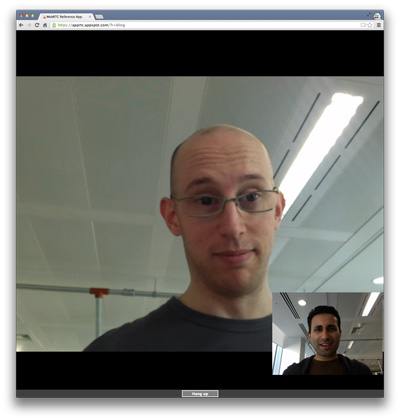

WebRTC across platforms

- Chrome and Chrome for Android

- Firefox and Firefox for Android

- Opera

- Native Java and Objective-C bindings

(example app, API)

1,000,000,000+ WebRTC endpoints

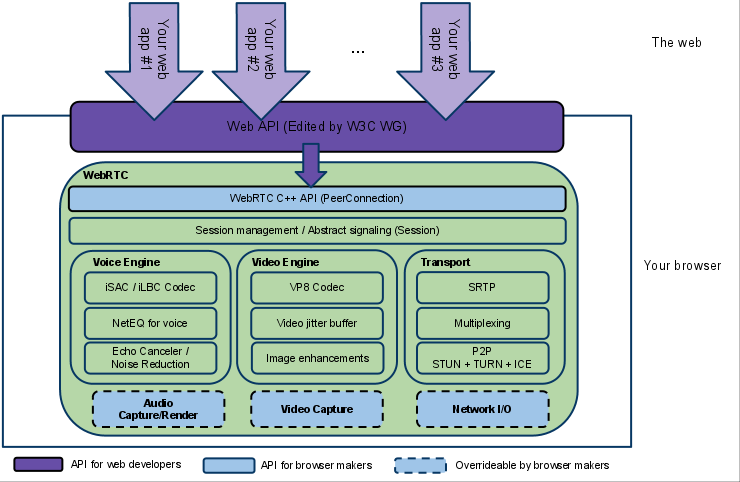

What do we need for RTC?

Four main tasks

- Acquiring audio and video

- Establishing a connection between peers (signaling)

- Communicating audio and video

- Communicating arbitrary data

Three main JavaScript APIs

- MediaStreams (aka getUserMedia)

- RTCPeerConnection

- RTCDataChannel

Communicate Media Streams

Diagram: two peers, each using getUserMedia + RTCPeerConnection, exchanging media streams in both directions.

WebRTC architecture

RTCPeerConnection without signaling
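"Without signaling" means both RTCPeerConnections live in the same page, so the offer, answer and ICE candidates are handed across directly instead of through a server. A sketch of that loopback using the current promise-based API (`addTrack`/`ontrack` rather than the older `addStream`); `connectLoopback` is hypothetical, and the constructor is a parameter so that `RTCPeerConnection` can be passed in a page.

```javascript
// Hypothetical loopback: two peer connections in one page, no signaling server.
// Pass RTCPeerConnection as `PeerConnection`; `localStream` comes from getUserMedia.
async function connectLoopback(PeerConnection, localStream, onRemoteStream) {
  const pc1 = new PeerConnection(); // the caller
  const pc2 = new PeerConnection(); // the callee

  // Signaling shortcut: hand each ICE candidate straight to the other peer.
  pc1.onicecandidate = (event) => {
    if (event.candidate) pc2.addIceCandidate(event.candidate).catch(console.error);
  };
  pc2.onicecandidate = (event) => {
    if (event.candidate) pc1.addIceCandidate(event.candidate).catch(console.error);
  };

  // Fires once per incoming track; audio and video share the same remote stream.
  pc2.ontrack = (event) => onRemoteStream(event.streams[0]);

  // Send every local track from pc1.
  localStream.getTracks().forEach((track) => pc1.addTrack(track, localStream));

  // Offer/answer exchange, passed directly instead of over a signaling channel.
  const offer = await pc1.createOffer();
  await pc1.setLocalDescription(offer);
  await pc2.setRemoteDescription(offer);
  const answer = await pc2.createAnswer();
  await pc2.setLocalDescription(answer);
  await pc1.setRemoteDescription(answer);
  return [pc1, pc2];
}

// In a page:
// connectLoopback(RTCPeerConnection, stream, (remote) => { remoteVideo.srcObject = remote; });
```

In a real two-machine app, the same offer, answer and candidates would be serialized and sent over a signaling channel of your choice (WebSocket, XHR, etc.).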

The canonical, full-fat video chat app!

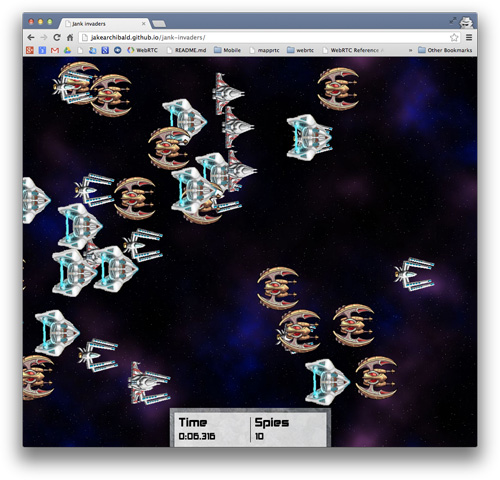

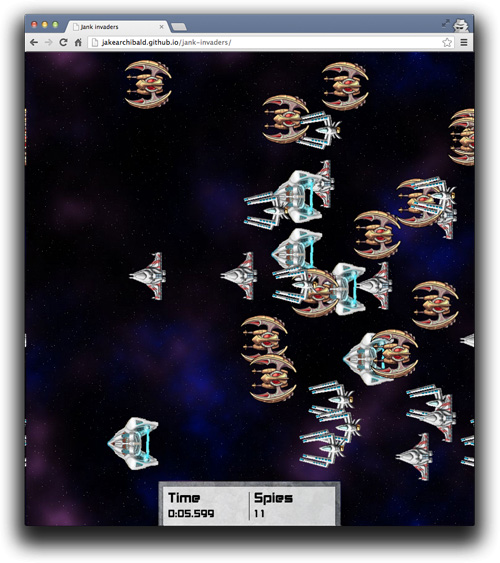

RTCDataChannel

Bidirectional communication of arbitrary data between peers

Communicate arbitrary data

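// dataChannel: an open RTCDataChannel (assumed); handle(): app-defined handler
dataChannel.onmessage = function (event) { handle(JSON.parse(event.data)); };
// ...
var myData = [
  {
    id: "ship1",
    x: 24,
    y: 11,
    velocity: 7
  },
  // ...
];
dataChannel.send(JSON.stringify(myData));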

Diagram: two peers, each using RTCDataChannel + RTCPeerConnection, exchanging data in both directions.

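// dataChannel: an open RTCDataChannel (assumed); handle(): app-defined handler
dataChannel.onmessage = function (event) { handle(JSON.parse(event.data)); };
// ...
var myData = [
  {
    id: "ship7",
    x: 19,
    y: 4,
    velocity: 18
  },
  // ...
];
dataChannel.send(JSON.stringify(myData));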

RTCDataChannel

- Same style of API as WebSockets (send(), onmessage)

- Ultra-low latency

- Reliable or unreliable delivery, configurable per channel (runs over UDP)

- Secure: data is always encrypted (DTLS)
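A sketch of opening a channel suited to game-state updates like the `myData` example: `ordered: false` with `maxRetransmits: 0` gives UDP-like, fire-and-forget delivery, while omitting both options gives a reliable, ordered channel. Because `send()` takes strings or binary data, the objects are serialized as JSON. `openStateChannel`, `sendShips` and the `"game-state"` label are hypothetical.

```javascript
// Open an unordered, unreliable data channel on an existing RTCPeerConnection
// and wrap JSON encoding/decoding around it.
function openStateChannel(peerConnection, onShips) {
  const channel = peerConnection.createDataChannel("game-state", {
    ordered: false,    // late messages are not held back for earlier ones
    maxRetransmits: 0, // lost messages are never resent
  });
  // Same event-handler style as a WebSocket.
  channel.onmessage = (event) => onShips(JSON.parse(event.data));
  return {
    channel,
    sendShips(ships) {
      // send() throws while the channel is still "connecting".
      if (channel.readyState === "open") channel.send(JSON.stringify(ships));
    },
  };
}
```

The remote peer receives the channel through `peerConnection.ondatachannel` and can attach the same kind of `onmessage` handler.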

RTCDataChannel without signaling

Audio in the Web Platform

<audio src="chrome.mp3"></audio>

Why Web Audio when we have <audio>?

- Precise timing of multiple overlapping sounds

- Audio pipeline/routing for effects and filters

- Visualize and manipulate audio data

Web Audio can do a LOT...

- Oscillators

- Sequences/rhythms/loops

- Fade-ins/fade-outs/sweeps

- Time-based event scheduling

- Frequency and waveform analysis

- Acoustic environments: reverb, etc.

- Waveshaping (non-linear distortion)

- Dynamics processing (compression)

- Filtering effects: radio, telephone, etc.

- Distance attenuation and sound directionality

- Doppler shift: changing pitch for moving sources

- 3D spatialization: positioning sound at a particular place
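Several of these features come down to connecting a few nodes and scheduling parameter changes on the AudioContext clock. A sketch of an oscillator with a fade-in and fade-out, assuming a standard (unprefixed) `AudioContext`; `playTone` and its default values are illustrative.

```javascript
// Play a tone with linear fade-in/fade-out, scheduled on the context clock.
// `ctx` is an AudioContext; all times are in seconds.
function playTone(ctx, frequency = 440, duration = 1, fade = 0.1) {
  const osc = ctx.createOscillator();
  const gain = ctx.createGain();
  osc.type = "sine";
  osc.frequency.value = frequency;

  const start = ctx.currentTime;
  const end = start + duration;
  gain.gain.setValueAtTime(0, start);
  gain.gain.linearRampToValueAtTime(1, start + fade); // fade in
  gain.gain.setValueAtTime(1, end - fade);
  gain.gain.linearRampToValueAtTime(0, end); // fade out

  osc.connect(gain); // oscillator -> gain -> speakers
  gain.connect(ctx.destination);
  osc.start(start);
  osc.stop(end);
  return osc;
}

// In a page (after a user gesture, since autoplay policies may suspend audio):
// playTone(new AudioContext(), 440, 2);
```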

Web Audio status

- Chrome desktop and Android — including gUM input

- Safari 6.0+ and iOS6+

- Firefox 25 desktop and Android

- Mic to speaker latency as low as 5ms

More information? Web Audio talk demos

getUserMedia ☞ Web Audio

// Success callback when requesting audio input stream
function gotStream(stream) {
  // Unprefixed AudioContext, falling back to the old WebKit-prefixed one
  var audioContext = new (window.AudioContext || window.webkitAudioContext)();
  // Create an AudioNode from the stream
  var mediaStreamSource = audioContext.createMediaStreamSource(stream);
  // Connect it to the destination or any other node for processing!
  mediaStreamSource.connect(audioContext.destination);
}

navigator.mediaDevices.getUserMedia({audio: true}).then(gotStream);

gUM + Web Audio + WebGL

gUM ☞ Web Audio ☞ RTCPeerConnection

Capture microphone input and stream it to a peer with processing applied:

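// Assumes peerConnection is an RTCPeerConnection created elsewhere
var context = new AudioContext();

navigator.mediaDevices.getUserMedia({audio: true}).then(gotAudio);

function gotAudio(stream) {
  var microphone = context.createMediaStreamSource(stream);
  var filter = context.createBiquadFilter();
  var peer = context.createMediaStreamDestination();
  microphone.connect(filter);
  filter.connect(peer);
  // Send the processed audio; addTrack replaces the deprecated addStream
  peer.stream.getAudioTracks().forEach(function (track) {
    peerConnection.addTrack(track, peer.stream);
  });
}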

Web MIDI

- New proposed standard

- Standard MIDI files: not just cheesy background music!

- Connect controllers, synthesizers and more

- Implemented in Chrome behind a flag - Mac, Windows, Linux, ChromeOS and Android!
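A MIDI channel message is a status byte (upper four bits: message type; lower four bits: channel 0-15) followed by data bytes; for notes those are the note number and velocity. A sketch of decoding note messages and listening on every input obtained from `navigator.requestMIDIAccess()`; `parseMidiMessage` and `listenForNotes` are hypothetical helpers. By MIDI convention, a Note On with velocity 0 is treated as a Note Off.

```javascript
// Decode a raw MIDI message (e.g. MIDIMessageEvent.data) into a note event.
// Returns null for anything other than Note On (0x9n) / Note Off (0x8n).
function parseMidiMessage(bytes) {
  const [status, note, velocity] = bytes;
  const command = status & 0xf0;
  const channel = status & 0x0f;
  if (command === 0x90 && velocity > 0) {
    return { type: "noteon", channel, note, velocity };
  }
  if (command === 0x80 || (command === 0x90 && velocity === 0)) {
    return { type: "noteoff", channel, note, velocity };
  }
  return null;
}

// Attach a note handler to every connected MIDI input.
// `midiAccess` is the MIDIAccess object from navigator.requestMIDIAccess().
function listenForNotes(midiAccess, onNote) {
  midiAccess.inputs.forEach((input) => {
    input.onmidimessage = (event) => {
      const parsed = parseMidiMessage(event.data);
      if (parsed) onNote(parsed);
    };
  });
}

// In a page:
// navigator.requestMIDIAccess().then((access) => listenForNotes(access, console.log));
```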

Synths and drum machines

</talk>

Thank You!

Slides: goo.gl/lzhB1Y